Datasets = Artistic Material

In early 2020, before the widespread adoption of large language models like ChatGPT and text-to-image generators like Midjourney, I founded the UVM Art and AI Research Group, and I became one of the only faculty from the arts and humanities working in AI at my university.

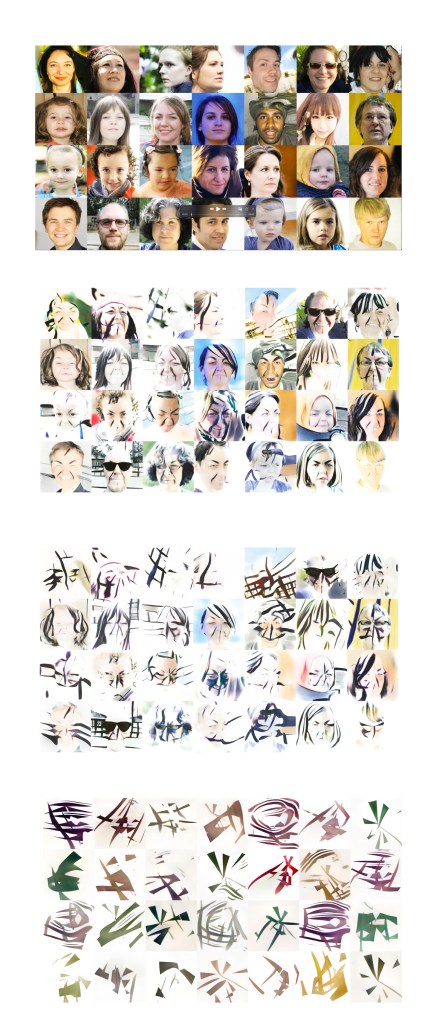

Initially, I was interested in how machine learning might serve my art practice and its inquiries into systems, rules, and power structures. A National Science Foundation grant provided the support of two graduate students and access to the Vermont Advanced Computing Center (VACC), a high-performance computing (HPC) facility on campus equipped with advanced computational resources designed to perform highly intensive tasks. We decided to work with generative adversarial networks (GANs) because they were helpful in visual pattern recognition, and we could work with them remotely through the pandemic by sending commands and computations to the VACC. At the time, artists were using Nvidia’s StyleGAN, which gained widespread recognition for generating realistic faces of non-existent people after being trained on a dataset of 70,000 human portraits, the Flickr-Faces-HQ Dataset. We also designed a genetic algorithm, a machine-learning computer program that solves problems by mimicking the process of Darwinian natural selection. We customized the code for this model and could run it on our laptop computers.
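For readers curious about what "mimicking natural selection" looks like in practice, here is a minimal Python sketch of a generic genetic algorithm. It is not our research group's code: the toy objective (counting the 1s in a list of bits), the function names, and every parameter value are assumptions chosen only to show the cycle of selection, crossover, and mutation.

```python
import random

def fitness(genome):
    # Toy objective (an assumption for illustration): count the 1s.
    return sum(genome)

def mutate(genome, rate, rng):
    # Flip each bit independently with probability `rate`.
    return [1 - g if rng.random() < rate else g for g in genome]

def crossover(a, b, rng):
    # Single-point crossover: head of one parent, tail of the other.
    point = rng.randrange(1, len(a))
    return a[:point] + b[point:]

def evolve(genome_len=20, pop_size=30, generations=60, rate=0.02, seed=0):
    rng = random.Random(seed)  # seeded so runs are repeatable
    population = [[rng.randint(0, 1) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    history = []  # best parent fitness in each generation
    for _ in range(generations):
        # Selection: keep the fitter half of the population as parents.
        population.sort(key=fitness, reverse=True)
        parents = population[: pop_size // 2]
        history.append(fitness(parents[0]))
        # Reproduction: refill the population with mutated offspring.
        children = [
            mutate(crossover(rng.choice(parents), rng.choice(parents), rng),
                   rate, rng)
            for _ in range(pop_size - len(parents))
        ]
        population = parents + children
    best = max(population, key=fitness)
    return best, history

if __name__ == "__main__":
    best, history = evolve()
    print("best genome:", best)
    print("fitness per generation:", history)
```

Because the parents are carried over unchanged, the best score never drops from one generation to the next. In an art context, the list of bits would be swapped for a description of a composition, and the fitness function for whatever quality the artist chooses to reward.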

At the forefront of our inquiry, we set out to master and interrogate these machine-learning models. Our humanistic concerns with authorship, ownership, artistic intent, ethics and bias, appropriation, provenance, and environmental sustainability set us apart from researchers working with the tools to solve specific problems. We quickly discovered that even computer scientists struggle to understand how neural networks make decisions, that scientific papers would not answer our humanities- and arts-based questions, and that the best way to know how machine learning worked was to work with it hands-on. Understanding that the image datasets used to train AI models must be taken seriously led to what is now at the core of my art practice: the artist-made dataset. This understanding also informs our hashtag #ownyourdataset. The research has further evolved to focus on AI epistemology and conventions that harm people and conflict with environmental sustainability. In addition to our work with datasets, we are committed to understanding how AI models work and to revealing their computation, which is too often personified and equated with human thinking.

Vibrant collaborations with computer science and engineering faculty contribute to the meaningful outcomes of our research. I’ve learned (and continue to learn how) to navigate and push back against the mechanical and scientific certitude of the STEM disciplines while finding common ground with STEM scholars. On a basic level, we share UVM’s Our Common Ground values, and more broadly, we share concern for the well-being of people and the planet.

The disruptive impact and potential harms of AI are inherently linked to training data. “Foregrounding Artist Opinions: A Survey Study on Transparency, Ownership, and Fairness in AI Generative Art,” a recent survey study I co-authored with Juniper Lovato and others, revealed that while artists’ opinions on AI’s uses varied, over 80% of participants agreed that full and legal disclosure should be required regarding the art and images used to train AI models.

In my work, I view datasets as comprising thousands of individual pieces, much like an artist’s series. Building a large dataset is akin to developing such a series, where each part contributes to a broader theme. By creating datasets rooted in artistic exploration, artists can unlock AI’s positive possibilities and establish a solid, long-term base for their inquiries. This method has been particularly impactful for my students. Since 2020, I’ve created various datasets. My current focus is on The Damaged Leaf Dataset. Audiences connect to this work as it stands independently, regardless of how I use it in machine learning experiments.

Like the artists Vera Molnár and John Cage, I am interested in experimentation and iteration. As I work toward a solo show set for the early months of 2025, experimentation continues with The Damaged Leaf Dataset. My current portfolio is immersed in the botanical aesthetics of the Vermont landscape and, in doing so, challenges the urban-biased aesthetic that dominates contemporary art. Along these lines and with an interest in biomimicry, my team’s inquiry has evolved to challenge the built architectures of machine learning models; we are currently developing a model based on algorithms that imitate botanical life. Choreographer and dancer Julian Barnett collaborates with us on a eulogy for trees that did not survive Vermont’s Lymantria dispar outbreak. An additional team project trains the machine learning model CycleGAN, a GAN that can work with two datasets, using The Damaged Leaf Dataset and a healthy Northern Red Oak leaf dataset to symbolically heal the damaged leaves.

The Damaged Leaf Dataset (DLD)

The Damaged Leaf Dataset (DLD) is a symbolic expression of an ecosystem out of balance. Its forms are the result of an outbreak of Lymantria dispar caterpillars that defoliated the forests of Northern Vermont in the summer months of 2021 and 2022. It expresses a patterned conflict between the Earth’s soft, fleshy animal, human, and plant bodies and the misgivings of hard mechanical certainty. It serves to bridge an alliance between the machine world and the natural world.

Machine bodies are made of Earth metals and run on fossil fuels; Gaia, the Earth, is the machine’s maker and master. Designed by humans who have forgotten their own reliance on the plant world to live and breathe, machines and machine logic are partitioned from the natural world. Does the intelligent machine know that its fate is determined by a habitable Earth?

As a growing archive of messages and symbols that appear as majestical creatures, cartographies, and unconscious associations, the DLD is information. When fed to artificial intelligence and fabricated by industrial machines, its meanings are imprinted in machine memory. Might this sharing of knowledge foster a machine intelligence that is informed by botanical understanding? Might it raise human expectations for how our technology should contribute to human and environmental well-being? Might it translate as an unearthed sublime?

This dataset includes 5000+ specimens.

Studio Assistants: Maya Griffith, Liv Welford, Jason Stillerman, Syd Culbert, Giovan Lowry

The Damaged Leaf Dataset Stories and Compositions

The Full Red Oak Dataset (FROD)

This dataset serves as a control for the Damaged Leaf Dataset; it includes 2000+ specimens.

Studio Assistants: Maya Griffith, Liv Welford

The Athena Dataset

The Athena Dataset deconstructs the centralized concentrated power that is symbolized by tower architecture. In its simplest form, the tower is an octagon shape topped by eight slanting triangles that meet at a center point in the sky, a place of one-pointed knowing and privilege.

Each glyph in the Athena Dataset is a flattening of the tower’s hierarchy. Its irregular triangles and octagon parts are scrambled and reassembled into new energetic circuits. When a tower falls and breaks into bits, the positive possibilities are infinite. This series is a joyful expression of iteration, resistance, imagination, and optimism.

The dataset is named for the Greek goddess Athena, who oversees warfare, wisdom, and the arts. As a working dataset, Athena deconstructs and makes transparent the power and perspectives of the technological systems it moves through.

This dataset includes approximately 400 original circuits and thousands more made with a genetic algorithm.

Athena Dataset compositions

Studio Assistants: Cameron Bremner, Ethan Davis, Emma Garvey, Oliver Hamburger, Anna Hulse, Sarah Pell, Veronika Potter, Fred Sanford, Chauzhen Wu, Yifeng Wei

Tiny Datasets

The Tiny Dataset series celebrates its local, limited, situated, chaotic, and precise results. These compositions are made by the UVM Art + AI Research Group’s genetic algorithm, a locally made meaningful machine. Each dataset includes no more than 12 shapes.